A concrete grasp of the terms "intelligence" and "artificial intelligence" is required before we can determine whether computers can truly be intelligent. Familiarity with binary numbers and computer hardware will help in reading this paper but is not necessary; both topics are covered in any introductory computer engineering textbook.

Computer scientists have proposed various criteria for deciding whether a machine is intelligent. One of the best known is Alan Turing’s famous Turing Test. In one room there are two computer terminals at which a questioner can type questions. One terminal is connected to a human, who supplies the responses; the other is connected to the computer being tested. If, after questioning both, the questioner cannot determine which terminal is connected to the human and which to the computer, the machine passes the test and can be considered intelligent (Fetzer 3-4). Attempts to pass the Turing Test have resulted in conversational computer programs such as FRANK, a computer psychiatrist (Figure 1). As the transcript shows, its responses seem hauntingly human in many cases, suggesting that a current computer might pass the Turing Test.

Another definition of machine intelligence is William Rapaport’s criterion: a machine’s understanding of natural language. Here the difficulty lies in the word "understand." Today's speech-recognition programs seem to understand when they type what the user says onto the screen or obey verbal commands. This comprehension, however, is very shallow: it consists only of translating sound waves into letters that form words. These words are then mapped to particular computer functions, thus simulating understanding (Fetzer 5-9). This simulation is very different from human comprehension, in which the brain processes sound waves to form images and concepts, compares these concepts with previous information, and uses creativity and logic to form new conclusions.
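To make the idea of mapping words to functions concrete, here is a minimal Python sketch. It assumes the sound waves have already been transcribed into text; the command words and the functions `open_file`, `save_file`, `close_window`, and `respond` are hypothetical illustrations, not taken from any real speech-recognition product.

```python
# A toy sketch of "understanding" by lookup: recognized words are mapped
# directly to functions. All command words and handlers are hypothetical.

def open_file() -> str:
    return "opening file"

def save_file() -> str:
    return "saving file"

def close_window() -> str:
    return "closing window"

# The program's entire "vocabulary": a word-to-function table.
COMMANDS = {
    "open": open_file,
    "save": save_file,
    "close": close_window,
}

def respond(transcript: str) -> str:
    """Run the first known command word found in an already-transcribed utterance."""
    for word in transcript.lower().split():
        handler = COMMANDS.get(word.strip(".,!?"))
        if handler is not None:
            return handler()
    return "command not recognized"

print(respond("Please open the report"))      # opening file
print(respond("What does this poem mean?"))   # command not recognized
```

Note that `respond("Do not open that")` also returns `"opening file"`: the table matches the word "open" without grasping the negation, which is the shallowness the paragraph describes.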

Although the Turing Test and Rapaport’s criterion are simple ways to judge how effectively a computer mimics humans, they do not measure intelligence. In each case the test measures only the results the computer produces, not the process it goes through to obtain them. Therefore, to determine whether a machine is intelligent, we need to go back to the basic definition of intelligence: "the ability to learn or understand from experience . . . use of the faculty of reason in solving problems" (Webster 600). This definition points to two crucial areas where computers’ abilities are questionable: the creativity necessary for reason, and understanding. In both cases, the real issue becomes whether intelligence is based on results or processes, simulation or emulation.

Armed with a basic definition of intelligence, we can now outline what artificial intelligence is. James Allen’s definition, "AI is the science of making machines do tasks that humans can do or try to do" (17), is inadequate. We cannot redefine intelligence simply because we are dealing with a non-biological subject. Artificial intelligence is, instead, exactly what the term denotes: the attempt to create intelligence in a machine or otherwise unintelligent entity. This created intelligence must fulfill the fundamental definition of intelligence.

III. Creativity

Because there is no single, agreed-upon criterion for determining whether a machine is intelligent, we must examine specific examples of seemingly intelligent behavior, such as creativity, to ascertain whether the computer really "understand[s] from experience" and uses "the faculty of reason in solving problems" (Webster 600).

If computers could be programmed to be creative and imaginative, they would have tools besides logic to solve problems, just as humans use faculties besides logic when reasoning. "Imagination is a pervasive structuring activity by means of which we achieve coherent, patterned, unified representations. . . . Imagination is absolutely central to human rationality" (Johnson qtd. in Dreyfus xxi). Thus, because computers must reason to have intelligence, and imagination or creativity is required for reason, computers must be creative to be intelligent. Ada, Countess of Lovelace, often called the first computer programmer, seemed to think such creativity was impossible: "The Analytical Engine [a planned computer of the 1830s] has no pretensions whatever to originate anything. It can do [only] whatever we know how to order it to perform" (qtd. in Matthews 30).

How, then, can one explain the seemingly imaginative or creative behavior of several recent computer programs? One program can improvise modern jazz at the intermediate-beginner level, and another creates surprisingly artistic and unique images with no required input. Still other programs, starting from only a few basic ground rules of mathematics, have deduced mathematical concepts known to humans only within the last 300 years (Matthews 31-32). It is often difficult to tell the difference between the computer’s output and the creations of humans (Figure 2).

The apparent creativity of these behaviors, however, is based on random elements of the program constrained by bounds, not on the expression of emotion or on breaking away from normal and accepted standards, both of which are necessary for true creativity. "All these programs are inherently incapable of breaking out of 'standard' ways of thinking -- the hallmark of the ultimate type of creativity" (Matthews 32). While they may simulate imagination, these programs have no understanding beyond carrying out what the programmer has told them to do.
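The difference between bounded randomness and creativity can be seen in a minimal Python sketch of an "improviser." The scale, the step limit, and the function name `improvise` are assumptions chosen for illustration; this is not how the jazz program Matthews describes actually works.

```python
import random

# Hypothetical bounds: notes must come from a C major pentatonic scale,
# and each step may move at most two scale positions up or down.
SCALE = ["C", "D", "E", "G", "A"]
MAX_STEP = 2

def improvise(length: int, seed: int = 0) -> list[str]:
    """Generate a melody from random choices that never leave fixed bounds."""
    if length <= 0:
        return []
    rng = random.Random(seed)  # seeded, so the "improvisation" is repeatable
    position = rng.randrange(len(SCALE))
    melody = [SCALE[position]]
    for _ in range(length - 1):
        step = rng.randint(-MAX_STEP, MAX_STEP)
        # Clamp so the melody never moves outside the allowed scale.
        position = min(max(position + step, 0), len(SCALE) - 1)
        melody.append(SCALE[position])
    return melody

print(improvise(8, seed=1))
```

However surprising a given melody sounds, it is one of the sequences permitted by `SCALE` and `MAX_STEP`, and calling `improvise` again with the same seed reproduces it note for note.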

Creativity in computers is not only impossible; it is also undesirable. If a computer were developed that did not follow its program, instead breaking away to entirely new and unexpected output, it could perhaps be creative. Given, however, that the very essence of a computer is its ability to follow human instructions, such a machine would no longer be considered a computer, but a malfunctioning device. In addition, if true creativity could ever be achieved, it would undermine the fundamental qualities that make computers so useful: dependability, honesty, and reliability (Seufert 53-54).

IV. Understanding

According to Roger Schank, understanding is a spectrum between two ends: complete empathy and making sense (44). For computers to "understand," and therefore be intelligent, they would have to be able both to interpret data and to relate it in some way to their experience. Computers are very good at interpreting and organizing data, and they can be programmed to some extent to "learn," that is, to modify their algorithms (methods) based on new data. Empathy, however, would require both emotion and consciousness, or self-awareness (Schank 44-46).
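One well-known way a program can "learn" in this limited sense is the perceptron learning rule, sketched below in Python. It is offered as a standard textbook example of a program modifying its method based on new data, not as a technique Schank describes; the function names and the AND training set are illustrative choices.

```python
# The perceptron learning rule: the program's "method" is a set of weights,
# and each wrong answer nudges those weights toward the right answer.

def predict(weights: list[float], bias: float, x: tuple[int, int]) -> int:
    total = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if total > 0 else 0

def train(examples, epochs: int = 20, rate: float = 1.0) -> tuple[list[float], float]:
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        mistakes = 0
        for x, target in examples:
            error = target - predict(weights, bias, x)
            if error != 0:
                mistakes += 1
                weights = [w + rate * error * xi for w, xi in zip(weights, x)]
                bias += rate * error
        if mistakes == 0:  # every example answered correctly; stop adjusting
            break
    return weights, bias

# Learn logical AND from four examples.
AND_EXAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train(AND_EXAMPLES)
print([predict(weights, bias, x) for x, _ in AND_EXAMPLES])  # [0, 0, 0, 1]
```

The program ends up answering correctly, but what changed is only a few numbers; nothing in it relates the data to any experience of its own, which is the other half of understanding in Schank's sense.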

While current computers clearly do not have emotions or self-awareness, neuroscientists and computer engineers are collaborating to create a computer whose structure more closely resembles that of the human brain, with many parallel processors interconnected much as neurons are interconnected in the brain. Cognitive scientists believe they have discovered that emotions result from neurotransmitters polarizing various neurons in the brain, and that emotions are either positive or negative. Because current digital computers are binary, positive and negative states could easily be represented, leading some AI experts to theorize that emotion could be implemented in computers (Nadeau 55). However, the required technology and knowledge are still missing in both neurology and computer engineering.
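How easily a binary machine can represent a positive or negative state can be shown with a toy Python sketch: a neuron-like unit that sums weighted inputs and reduces the result to a single bit. The function name `valence`, the inputs, and the weights are all hypothetical. The sketch captures only the sign of a state and models nothing about what an emotion is, which is precisely the missing knowledge the paragraph above identifies.

```python
# A toy "valence" unit: several inputs, like signals arriving at a neuron,
# are combined and reduced to one bit, positive (1) or negative (0).
# The inputs and weights are invented for illustration only.

def valence(signals: list[float], weights: list[float]) -> int:
    """Return 1 for a 'positive' state and 0 for a 'negative' one."""
    if len(signals) != len(weights):
        raise ValueError("each signal needs exactly one weight")
    activation = sum(s * w for s, w in zip(signals, weights))
    return 1 if activation > 0 else 0

WEIGHTS = [1.0, -2.0, 0.5]  # hypothetical: pleasant sound, pain, familiar face
print(valence([0.9, 0.1, 0.7], WEIGHTS))  # 1 (positive)
print(valence([0.1, 0.8, 0.0], WEIGHTS))  # 0 (negative)
```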

Some AI scientists have theorized that "consciousness could be an emergent property of a massively parallel computer system" (Nadeau 61-62) such as the emotion simulator mentioned above. These computer engineers and neuroscientists, in attempting to build such a self-aware system, fail to take into account that "consciousness has an existence that is somehow anterior to or separate from the neuronal activities of the brain" (Nadeau 159). Clearly, if we have not even discovered the physical organ or mechanism that controls human consciousness (if it physically exists at all), and therefore do not fully understand it, we cannot hope to reproduce it in a machine.

For us to make a machine that knows it exists (a conscious machine), we would have to understand how we know that we exist. Thus, while we may be able to simulate emotions or self-awareness, the simulation will be only "skin" deep and will disappear once one looks past the programs the machine runs. There is no reason to believe that a machine that can simulate emotions will suddenly develop the consciousness that is central to humanity and intelligence. Such simulation is neither necessary nor desirable, however; instead of trying to force emotions or irrationality on an inherently unemotional, logical device, we would be better off leaving "values and key decisions" to humans (Hayes-Roth 112).

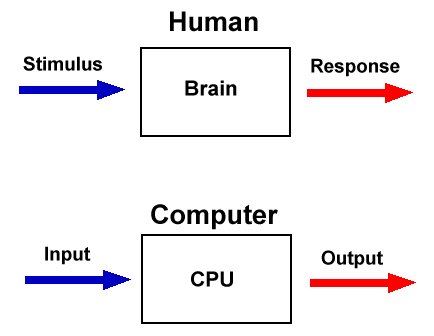

If the "central dogma" of AI -- that "what the brain does may be thought of at some level as a kind of computation" (Charniak 6) -- is wrong, then artificial intelligence cannot be intelligence at all. The Basic Model of AI states that humans react to stimuli based on certain processes in much the same way that computers turn input into output using a program (Figure 3). While for many low-level activities this model is accurate, the deeper one attempts to simulate, the clearer it becomes that "there are few but crucial differences that distinguish human beings from digital machines" (Fetzer 271).

One main difference is that humans are not digital: human thinking is not based on numbers the way computer processing is, and so it is not really computation at all. "People think as they (we) do because of innate genetic endowment, shaped in development by contact and culture. But unless a computer were constructed with desires and innate properties like those of people, it probably wouldn’t think exactly like us" (Waltz). There are obviously limits to the parallels between human and computer thought processes.

"Machines might be able to do some of the things that humans can do (add, subtract, etc.), but they may or may not be doing them in the same way that humans do them" (Fetzer 18). If a computer simply gets the same result with the same input that a human would, regardless of process, it is called simulation. Emulation, on the other hand, is getting the same result with a machine and using the same method a human would.

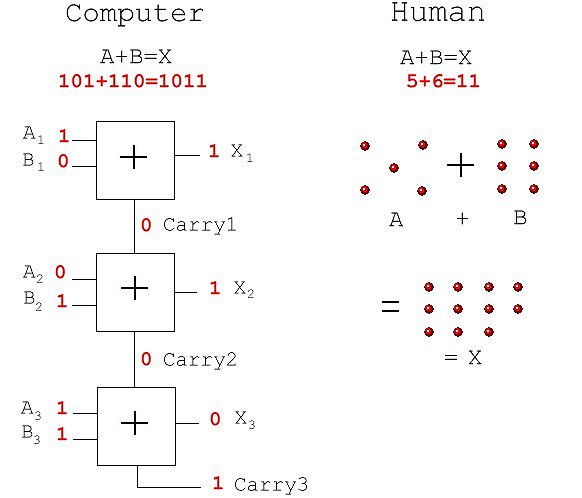

For example, computers simulate addition by translating numbers into binary, representing the binary digits as electrical impulses, and then switching other impulses on and off according to fixed logical conditions. Humans, on the other hand, add by visualizing, counting, recalling memorized facts, or a combination of these operations (Figure 4). One way computers could emulate human addition would be to use a table of the sums of the digits zero through nine, like the one humans memorize, together with rules for carrying and correct digit placement.
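The contrast can be sketched in Python. `add_binary` imitates in software the carry logic that hardware adders build from gates, while `add_decimal` follows the schoolbook method described above, using a memorized table of digit sums plus carrying. Both function names are hypothetical, and the sketch is only an illustration: both versions produce the same answers, and both still run on the same binary hardware underneath.

```python
# Two ways to add non-negative integers that give the same answer.

def add_binary(a: int, b: int) -> int:
    """'Simulation': add using bitwise operations, as logic gates do."""
    while b:
        carry = (a & b) << 1  # positions where both bits are 1 produce a carry
        a = a ^ b             # sum of the bits, ignoring carries
        b = carry
    return a

# The memorized table of single-digit sums, 0+0 through 9+9.
# (Built with Python's own + for brevity; a person learns it by rote.)
DIGIT_SUMS = {(x, y): x + y for x in range(10) for y in range(10)}

def add_decimal(a: int, b: int) -> int:
    """'Emulation': column addition using the digit table and carrying."""
    xs = [int(d) for d in reversed(str(a))]
    ys = [int(d) for d in reversed(str(b))]
    digits, carry = [], 0
    for i in range(max(len(xs), len(ys))):
        x = xs[i] if i < len(xs) else 0
        y = ys[i] if i < len(ys) else 0
        column = DIGIT_SUMS[(x, y)] + carry  # look up the sum, add any carry
        digits.append(column % 10)           # write down the ones digit
        carry = column // 10                 # carry the tens digit
    if carry:
        digits.append(carry)
    return int("".join(str(d) for d in reversed(digits)))

print(add_binary(478, 256), add_decimal(478, 256))  # 734 734
```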

Although a computer can do some things that would be considered intelligent in a human, simulation programs do not "understand" what they are doing; they simply manipulate electrical signals that represent numbers. Therefore, if computer programs do not parallel the human processes for the same data, the Basic Model no longer holds, and the central dogma of AI no longer applies. Thus, current programs that base claims of "intelligence" on this dogma are not, in fact, intelligent.

VI. Conclusion

Through the analysis of computers’ capabilities in the various areas of intelligence, we can conclude that traditional computers are not and cannot be intelligent. By definition, intelligence involves using reason to solve problems and gaining understanding through previous experience. Computers may seem to use reason and have understanding, but this alleged intelligence is only a façade hiding the clever code that the machine follows exactly. The machines’ artificial intelligence is just that: artificial.

Reason is more than just logic; it also involves creativity (Johnson qtd. in Dreyfus xxi). While some programs may seem creative, they are really only following directions, incorporating random data to make their results appear original. Because creativity involves producing something original and inspired by emotion (Matthews 32), computers are not creative and therefore do not fully use reason to solve problems.

Just as reason is more than logic, understanding is more than simply making sense; it requires emotion and consciousness. Because of our lack of knowledge about our own emotions and consciousness, we cannot hope to create this kind of self-awareness in a machine. It is not enough for a machine simply to appear conscious; it must possess the fundamental qualities of self-awareness that are present only in living beings such as humans.

One of the main problems with any type of computer intelligence is its tendency to simulate rather than emulate. The entire justification for AI is the theory that humans think the way computers calculate. The brain’s computations, however, are entirely different from those of a computer. We cannot, therefore, assume that simply because a human action is intelligent, the computer equivalent of that action denotes intelligence.

While the media and extreme AI proponents claim that "if all knowledge can be formalized, then the human self can be matched . . . by a machine" (Bronowski qtd. in Nadeau 24), we see that mere knowledge and data crunching algorithms, essentially all that today's computers are capable of, do not equate to intelligence. Schank explains the only way computers could truly be intelligent:

Computers do only what they have been programmed to do, and this essential fact of computers will not change. Any intelligence computers may have will result from an evolution of our ideas about the nature of intelligence -- not as a result of advances in electronics. (Schank 7)

Although such human-like characteristics as creativity and consciousness may be simulated quite convincingly, underneath it all the computer is still the same, with no understanding and therefore no intelligence. This does not mean that there is no reason to continue AI work; machines that act with seeming intelligence are often good enough for many applications. We simply should realize that "the time has come to face the possibility that it [machine intelligence] will never be realized with a digital computer" (Wilkes 17).

VII. Sources Cited

Allen, James F. "AI Growing Up." AI Magazine Winter 1998: 13-23.

Charniak, E. et al. Introduction to Artificial Intelligence. Reading, MA: Addison-Wesley, 1985.

Dreyfus, Hubert L. What Computers Still Can't Do. Cambridge, MA: The MIT Press, 1992.

Fetzer, James H. Artificial Intelligence: Its Scope and Limits. Dordrecht, The Netherlands: Kluwer Academic Publishers, 1990.

Hayes-Roth, Frederick. "Artificial Intelligence: What Works and What Doesn't?" AI Magazine Summer 1997: 99-113.

Matthews, Robert. "Computers at the Dawn of Creativity." New Scientist Dec. 1994: 30-34.

Nadeau, Robert. Mind, Machines, and Human Consciousness: Are There Limits to AI? Chicago: Contemporary Books, Inc., 1991.

Schank, Roger C. The Cognitive Computer. Reading, MA: Addison-Wesley, 1984.

Seufert, Wolf. "A Measure of Brains to Come." New Scientist Oct. 1994: 53-54.

Waltz, David. Interview. "Artificial Intelligence." URL:http://tqd.advanced.org/2705/waltz.html (10 Mar. 1999).

Wilkes, Maurice V. "Artificial Intelligence as the Year 2000 Approaches." Communications of the ACM Aug. 1992: 17-18.